UX Research: book notes

Notes on the O’Reilly book UX Research by Brad Nunnally and David Farkas

Somewhat helpful as a field manual, somewhat helpful as a guide to selling the esoteric commodity, this is a decent book with some good content, mostly around interviewing.

Why Do UX Research?

There is a good reason that research exists in the social sciences.

I think of it like this: there exist a number of researchers who have studied a particular group of people for a while (let’s say Inuits). These researchers have, by whatever means, learned about Inuits and want to share their findings because they think there is value in developing a deeper understanding of Inuit life. Quite often, this happens in the context of a larger academic objective, such as Anthropology or Ethnomusicology, where knowing something specific helps others understand both very general things and how to approach other very specific things. Usually a key objective is to spread knowledge of Inuits to members of white empires who take actions impacting Inuits, but are quite ignorant of Inuit realities. But really, each researcher has their own motivations and most will usually do anything they can to fund their work’s continuance.

Similarly, in business, there exist people who know a thing or two about users and ought to develop that knowledge so that a large and powerful institution doesn’t run roughshod over real people. In business terms, learning about key groups (customers, clients, distributors, etc) can help boost revenue (sell more product), improve strategic decisions, and reduce costs.

Given this framing, researchers who know about Inuits should share what they’ve learned by lectures, workshops, publications, and the like. People who find Inuit life interesting should get involved in it any way they can, by visiting, watching documentaries, going to cultural events, etc. To transition into a productive researcher, the learner ought to become a student, soaking in the outputs of researchers before them (lectures, workshops, etc), then find new questions no one is really sure how to answer, propose new perspectives, and look for relevant cases where institutions that researchers can influence come into contact with Inuits. At this point it becomes genuinely productive to have these researchers go “in the field” and spend time with Inuits.

In practice, businesses are not this thoughtful and behave much more like trendy consumers, spending money on things to help them save (or gain) face. Substance is distinctly secondary to appearances and looking like a winner to a mainstream audience is the key to success. When a manager finds research results they don’t like, they can attack the methods to effectively kill the finding. When they have a conclusion they’d like to see, they can say there is “some” evidence for it, even if the evidence is terribly weak. Businesses funding UX research rarely have the follow-through to ensure that any findings be effectively passed on to others or recorded for future use. Sharing findings with anyone outside the company is never even considered.

Of course this behavior is quite reasonable if you imagine that the business is run by a bunch of individuals who have day jobs so that when they get home they can afford cute shoes and nice vacations with loved ones. Even if they drive the company into the ground, leaving for another job is often a comfortable move that adds some spice to life anyway and is probably a good career move.

Despite this, I think there is a basic value to UX research that should be clear from the Inuit example: pass on knowledge and develop it so your organization behaves less stupidly. To me, most of the possible benefit of UX research can be realized by simply putting in the time learning from those who already know the users, getting things down on paper for future learners, and conducting research as little as possible given a reflective consideration of what can already be gleaned from available information. Many times I have done considerable research to unveil some surprise that a couple old-timers in sales find obvious. “Well if you knew that the whole time, why didn’t you share it with anyone?!” — I screamed silently at my desk. (Poor incentives.)

Nunnally and Farkas, with seemingly no knowledge of academic research, pitch the typical capitalist ideology that “improving a product” will make the world a better place, because more people will choose to spend more money on it (which means they must like the product and are relatively more happy). In some of this book’s lovely sidebars representing the schtick of various consultants and thought leaders, UX research offers transcendence for business by revolutionizing products and business models. UX research can help your business address what people need and not just what they want, allowing game-changing (transcendent) innovation. UX research will “look beyond the symptoms and determine the underlying nature of the disease” (p 71).

What’s In This Book

Nunnally and Farkas cover things to do before you begin research, logistics around scheduling (certain forms of qualitative) research, a general outline of research methods (qualitative and quantitative), some clever tricks for interviewing, and a few good rules of thumb for what to do to finish up a bit of research.

Before Research Begins

Before agreeing to a specific research project, it’s often worthwhile to get a broader perspective on what users are experiencing and what they can get from competitors.

To get a quick glance at existing user experience, you can usually find quite a bit of substantial content by making a simple Heuristic Evaluation of the product you’re trying to improve. You simply go through the entire product and note anything that looks a bit dodgy, sorting each issue into one of a few types of problems and indicating its severity. I’m a big fan of this method, especially for new hires, because it puts problems into perspective and shows how much UX work there is to do before adding any complexity of new research. (In my experience, a heuristic review usually reveals a few months’ worth of UX work that you could dive in on without any research.)

Choosing methods of research is always tricky because there are no set rules for how to do this right. Nunnally and Farkas have a very nice exercise. First determine some questions that you’re trying to answer, then write down five methods you could use, with some notes for each on what answers you might hope to get from them (pp. 68–72). For each method that shows any promise, ask:

- What sample size do we need for this?

- What budget does this need?

- How much time and effort will this take?

- Are you game to put in that much time for this?

With some good ideas on what questions you can answer with what methods at what costs, start talking to stakeholders early. You’re seeking buy-in here and you’ll get it if you convince the stakeholder that your work will help them. If the stakeholder’s unit ignores users from the start, you’ll need to work to change this. Early talks can also set expectations, so that stakeholders can be realistic about how unimportant your product is to your users.😭 As you go, be sure to include them intermittently during research so they see your decisions as understandable and not baseless guesses or simply wrong (p 61). Ultimately, you are warming them up to appreciate your findings, whatever they may be.

Research Logistics

My least favorite part of UX research: in order to get any good findings, you’ll need a large amount of setup work to recruit participants, schedule things, book rooms, get access to relevant data, etc. I had a job interview where the manager told me that hiring a UX researcher meant they would also need to hire a recruiter, and then they may as well have 2 researchers. I think this is pretty much right.

Recruiting

Recruiting participants for a study requires knowing what kinds of users you want to talk to. Nunnally and Farkas recommend segmenting users by

- terms developed in past research

- categories used for current analytic data

- customer segments used by other parts of the business, such as marketing

- existing personas or profiles put together by you or others

For each subgroup, find three to five users (p 97).

If there is a screener from previous research, you might also start from that and ask “what is missing or needs to change?” The better your screening, the better your final data.

Recruiting people within your company can be easy, unless managers block you from accessing their people. To get around this, start talking with those managers early in the process to get their buy-in. As discussed above, you need to find a way for them to believe that they can benefit from your research.

Recruiting from outside your company is slow and draining. Cold calls are hard. Intercepting people in person is hard (though the authors recommend carrying a clipboard to get more participation). Online intercepts work (you know, the pop-ups that ask if you’d like to participate in UX research for this site). Hire a recruiting firm because they are professional and will get the job done. However, they will always find the easiest-to-find candidates, who are often younger and less experienced in their roles (pp. 100–103).

I especially loved the sage advice in this book that, no matter what, you’ll have no-shows and duds. Such is life. People have busy lives and showing up for some random study is never a high priority. (In college I signed up to participate in psychology studies and definitely slept through at least one. It was literally the least important thing on my schedule.) Similarly, a dud is someone who looked like the right kind of person to talk to (answered the screener right), but actually doesn’t help you answer your research questions. You can still extract some insights from duds if you improvise. (One time I interviewed someone who barely used our product and didn’t care about what it did, but I did get him to explain to me why, in his context, no one cared about our shit at all. This was very helpful.) Point about no-shows and duds: plan on them and recruit slightly more participants than you absolutely need.
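The arithmetic behind that last point can be sketched in a few lines of Python. This is a rough illustration and not something from the book: the 20% no-show figure, the segment names, and the function name `recruits_needed` are all assumptions to be replaced with your own numbers. Only the three-to-five-per-subgroup range comes from the authors.

```python
def recruits_needed(segments, per_segment=5, no_show_percent=20):
    """Return how many people to recruit per segment so that roughly
    `per_segment` usable sessions remain after no-shows and duds.

    no_show_percent is an assumed whole-number percentage (0-99),
    not a figure taken from the book.
    """
    if not 0 <= no_show_percent < 100:
        raise ValueError("no_show_percent must be between 0 and 99")
    expected_show_percent = 100 - no_show_percent
    # Integer ceiling division avoids floating-point rounding surprises.
    target = -(-per_segment * 100 // expected_show_percent)
    return {segment: target for segment in segments}


# Hypothetical segments; aim for 4 usable sessions in each.
plan = recruits_needed(["admins", "new users", "power users"],
                       per_segment=4, no_show_percent=20)
total_to_recruit = sum(plan.values())
print(plan, total_to_recruit)
```

If your past studies give you a real no-show rate, use that instead of a guess; the point is only that the buffer should be planned rather than discovered on the day.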

Some businesses struggle with recruitment. The book responds to a few of these cases:

- “We don’t have any users yet!” — study users of a competitor’s product

- “Target audience too small!” — find people who would know the sort of people you need and then work from there

- “Our product is still in stealth mode!” — make participants sign an NDA (p 106)

Opening Matter in a Script

Any time you conduct research with live participants, there are a number of things you need to cover before you dive in to the planned activity.

- Who we are

- Project background and the reason for this research

- Why your input is valuable

- A disclaimer that they can bail at any time

- How long the session will be

- How they will be compensated for their participation (p 88)

- Quick review of the screener: we chose you because you said you were an X kind of person who did Y behavior

- Signatures for consent form, NDA form

- Ask for permission to quote them (pp. 86–87)

It’s a major slog to handle all these things and keep the mood up; make this part brief and keep your tone encouraging.

Qualitative Methods

The authors’ list of methods is roughly the same as what you’ll find anywhere else UX research is discussed.

In a sidebar, “voice from the streets” Kyle Soucy describes a method she calls Collaging, wherein researchers show a bunch of images to participants and ask them which images represent how they feel about your product, or some aspect of the user experience. It’s a technique from marketing research and is entirely attitudinal, but I thought it was a fresh idea and liked it. I imagine you’d need very supportive stakeholders for anyone to accept the findings (pp. 158–159).

Other methods such as card sorts and user testing get short shrift in this book and instead Nunnally and Farkas go into terrific depth on interviewing techniques, which could also be used in contextual inquiry and some other methods.

Interviewing

Interviews can help with the “why” and “how” behind user behaviors. But are they uncovering attitudinal or behavioral data? The book ignores the extent to which the ego creates a socially desirable narrative out of the mess of real actions, but basically people will always lie, including in interviews, because they want to project an image of coherence and goodness, saving face instead of telling the truth. As noted earlier, the purpose of this book is to help in the workplace, where truth is often unimportant anyway.

The real point of an interview is to learn everything you can from the participant. This book says the participant is the teacher and the researcher is a student, which is a helpful rule of thumb for understanding how to conduct yourself in interviews. The student does not get to judge the teacher and should remain neutral and appreciative of anything the teacher has to share. It’s natural to get excited about some topics more than others, but remember that this will influence the interviewee’s behaviors too.

You’ll need a strong script, but also one that gives you enough flexibility to move away from it. (Traditionally there is a distinction between a “structured” interview, where we do not depart from the script, and an “unstructured” one where anything goes. Here they recommend a middle course, which I have also found the most practical approach.)

Typical things to talk about in a UX research interview include:

- Process: how do you do xyz? Walk me through that process

- Explanation: Can you explain that a little bit more?

- Description: Can you tell me what you’re seeing?

- Timing: When do you use xyz? What specifically causes you to do it?

- Frustrations: What do you do when you can’t xyz?

- Ideals: If anything was possible, how would you do xyz?

To build a script, it’s great to brainstorm every question you have, every topic you could talk through, and every way you can use a question to lure out an answer. However, you will also want to eventually take another pass and challenge each question and consider if you really need to ask it.

To make the script run smoothly, practice it. “Repeatedly asking questions to friends and then asking them how hard they were to answer helps me. At the end of the day, I’ve succeeded when our questions both surface interesting questions and don’t put an enormous burden on the interviewee.” (p 26) Practice your script with a co-worker or a friend to make sure it flows smoothly.

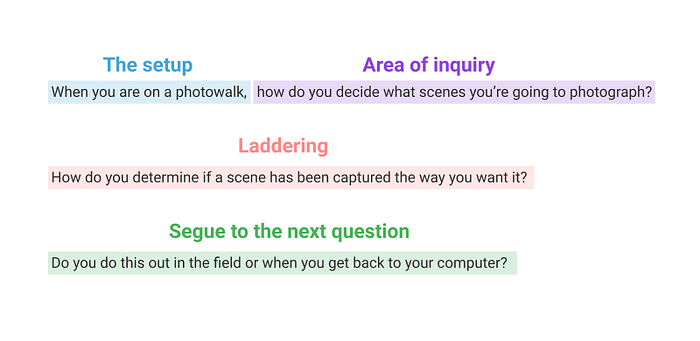

The basic structures of your script can be seen as setups, areas of inquiry, laddering (which gets you deeper), and segues (to keep it feeling conversational) (pp. 23–24).

All this talk about structure is helpful, but do remember that the point of your interview is to learn stuff and that often happens best when you go off the script to dig into what is most interesting (relative to your research questions).

To learn more from a person who does not realize they are an expert and is not very motivated to teach you, it can help to give the interviewee “handles” to answer your (big, abstract) research questions. Colin MacArthur, a researcher specializing in UX for government, explains, “a common tactic is asking people to think about a recent, significant life change. As they describe what happened, interviewers ask follow-up questions that focus on what we’re interested in (interactions with the government).” (p 26)

Generally speaking, leading questions and shallow questions are bad for interviews. A leading question can direct the participant to try to support an idea that they might not really believe in personally, such as that your product is good. A shallow question can give you a shallow answer, like when you ask if they use the product once a week or more. Avoiding these questions is a good rule of thumb.

However, the authors went a step further here and pointed out that you can use these kinds of “bad” questions strategically. A shallow question can help establish a rhythm in the interview of question followed by answer, so that the interviewee feels comfortable with this aspect of the activity and is ready to do more. A leading question can be useful to loosen someone up early on by making it easy for them to talk: “so are you pretty serious about photography?” The content of their answer is probably rubbish here, but it gets them going. Emotionally, they are feeling more engaged and, at the level of face work, they are now motivated to substantiate the claim you walked them into earlier (e.g. that they are serious about photography). A leading question can also be useful when you expect that their response will actually go in the opposite direction: “Is this the only product like this you’ve tried?” can get a better response than “Have you tried other products like this?” because the interviewee is motivated to clarify and present their own side of the story, which you have pretended to miss. Sometimes you can give the participant something to disagree with and get them going that way (pp. 20–22).

The goal in an interview like this is to get the teacher to present what they know (that is relevant to any of the research questions) and explain it to the student (in a way that they can understand).

Roles During Qualitative Sessions

One person will be the moderator, presenting questions and helping the subject feel at ease and ready to answer questions to the best of their ability. The moderator should mirror the subject, adopting their style of speech, attitude, and body language. This is basic “bedside manner” but for research rather than medicine. The moderator’s goal is to get the interviewee in a flow state where answering questions is easy enough to be doable but hard enough to keep them engaged (p 119).

Moderating is generally considered the harder job and you can improve your skills here by learning from improv comedy and practicing small talk. Farkas in particular emphasizes skills from improv relevant to moderation:

- build up the other person’s story; don’t knock it down

- try to get everyone’s contributions to support a story you are weaving together

- if a question falls flat, see what you can still get out of it, even if it’s not what you were planning to get

- think of your responses as some version of “yes, and” so that you can build up the scale of the story (ultimately delivering larger insights)

- warm up beforehand with deliberate exercises to make you feel more comfortable saying the first thing that comes to mind and going with it (pp. 133–149)

Developing your abilities at small talk can also help. The authors recommend going about this systematically at the office, by making small talk opportunistically with people and cycling through them, so that you chat again with the same person every week or so, providing a bit of fresh content and keeping the conversation casual. The goal is to get better at making others feel comfortable and free to share with you (pp. 128–130).

Besides the moderator, it is quite helpful to have a second person as a note taker. This person can jump in sometimes, but mostly should record what the person says and does so that the moderator doesn’t have to (and can be more fully present in the moment). As a note taker, the sound of you taking notes can influence the behavior of the interviewee. Nunnally and Farkas recommend you use paper so it’s quieter or, if you must type, keep typing all the time even if there’s nothing worth writing down, so you don’t stress a delicate situation later on, when there really is something to write down!

Like most of the field, the book presents note-taking as a chore. However, in my experience, the notes that come from different note takers vary tremendously in value. There is an art to taking good notes. When taking notes, it’s quite valuable to

- point out contradictions where the interviewee said one thing in one case and another later

- note awkward pauses, nervous laughter, or deliberate pandering (when the interviewee is trying to make you like them)

- point out when what they say does not actually make sense, such as “I would need this feature” when it’s clear they don’t understand that feature

- include notes on your notes when the interviewee addresses a technical topic or uses jargon that others reading your notes later won’t know

- frame what you write in terms relevant to what you were trying to learn in the session

- note what was not said that you would have expected to be

- note misunderstandings they have about your product or the area of inquiry; that’s just where they are coming from and how they view the world, so no need to correct them

- note any other information available in the session that might be revealing (using Zoom for interviews these days, we often ask participants to share their screen…which often lets me see their top bookmarks)

Remember that, in research, you are not just trying to learn content from the participant; you are also trying to learn about the participant. Learning how they see a problem may be more important than their thoughts on how to solve a problem.

Like moderation, note-taking can be improved through practice. A company’s all-hands meeting is a good opportunity: try taking notes here to include not only what’s on the slide, but who is speaking, where the emphasis is, and what was not said. Write down every significant point. A week later, review your notes and see which parts you can no longer understand. Those are areas where your note taking is insufficient and you are losing data.

Both moderator and note-taker need to respect some basic formalities in research. Dress appropriately if visiting an office versus a home. Be a gracious guest by respecting personal space, arriving on time and leaving on time too, leaving no trace, and giving assurances that they are in control. You can go “off record” any time and they can stop the interview at any time.

It’s ok to have one or two additional observers, but any more than that tends to ruin the dynamic (p 113).

Body Language

Nunnally and Farkas point to a few basic rules of body language that are worth respecting. Interviewees receive information from your body’s position, so do respect this and at least don’t send them the wrong message.

Don’t fold your arms or appear agitated, bored, or disappointed. Don’t look shocked or disgusted unless you want to convey that message to the interviewee.

Do lean in to show interest at appropriate times, keep arms and shoulders open, and mirror the participant’s body language (pp. 153–159).

If you are working with a participant from another culture or in another country, you should learn about local customs and social norms, as these often impact body language (pp. 162–163).

Quantitative Research

One book cannot cover all topics and this one made little attempt when it comes to quantitative research.

The authors present the usual list of methods with a few interesting touches:

- System Analytics

- Surveys

- Card Sorting

- “Tree Jacking” which means asking a user to navigate through categories and subcategories to find a thing

- Eye Tracking, which is not a focus of this book

- A/B Testing, which might be better considered a half-method as it only works in conjunction with another method such as interviews or web analytics

I want to point out that “system analytics” is a very broad category including what I consider the most useful and accessible information about user experience.

Web analytics are essential for basic facts about what platform users are working with such as Android vs iOS, Chrome vs Edge, and display size. It’s good to know what pages get the most visits, but the meaning isn’t always clear. Contemporary tools that track cursor movements and clicks are great. They allow you to see exactly what people are doing with a product and often what they read, where they go to try to do things, and what wrong turns they make.

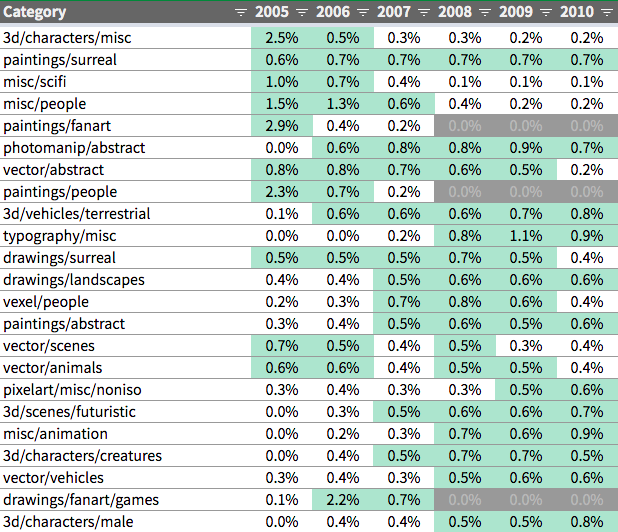

Usage analytics, the backend data which UI actions ultimately manipulate, are especially important for UX researchers who want to know what people are doing with a product. Reading through this data and developing an intimate knowledge of it is a precondition to producing simple representations such as charts, histograms, and average counts. For example, for product design around comments, read hundreds of comments. If you can see what people have actually written, you can begin to get a feel for patterns. These hunches can become hypotheses that drive studies and the studies can give you simple numbers and charts to share with others. However, the first-hand knowledge of what real people have actually done with your product is quite important, just as participating in interviews and listening to recordings is quite important beyond whatever summary findings are made from them later. This kind of plodding “case study” style research also helps you figure out how to clean up data, because you will learn about the junk that accompanies meaningful content. (For example, seeing data from accounts that were just set up for a demo helps you recognize these and exclude them from future research.)
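As a small illustration of that clean-up step, here is a minimal Python sketch. Everything in it is hypothetical: the record layout, the account names, and especially the rule that a demo account has "demo" in its name. In practice you only learn the real rule by reading the data first.

```python
from collections import Counter

# Hypothetical export of comment records from a product's backend.
comments = [
    {"account": "acme-demo", "text": "test test"},
    {"account": "northwind", "text": "Can we export this view?"},
    {"account": "northwind", "text": "Export worked, thanks."},
    {"account": "Sales Demo 2", "text": "lorem ipsum"},
    {"account": "globex", "text": "Where did the filter go?"},
]


def is_demo(account_name):
    # Assumed naming convention, for illustration only.
    return "demo" in account_name.lower()


# Keep only records from accounts that look like real usage.
real_comments = [c for c in comments if not is_demo(c["account"])]

# A simple count per account, the kind of number that ends up in a chart.
comments_per_account = Counter(c["account"] for c in real_comments)
print(comments_per_account)
```

The filter is the easy part. Knowing that demo accounts exist, and how to spot them, is what the reading is for.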

The book advocates for data-driven design or “making design decisions with actual data” (p 64). Quantitative research can function as evaluative (as in most A/B testing), generative (as in finding unused features or unvisited pages), or insight-creating (as in exploring survey data about possible product changes) (pp. 36–37). In my experience, most organizations are interested in evaluative research of this kind but make little time for generative research based on system analytics. Instead, research pointing to new ideas tends to come from sales and other groups who can point to significant revenue or cost to go chase after.

Finishing Research

“As you start to hear the same information again and again, you have conducted enough research.” (p 57)

At some point, it’s time for analysis and some presentation of your findings to colleagues.

The authors suggest you budget three hours of analysis for every one hour of raw research, which sounds reasonable. Often it’s good to put your findings into a spreadsheet for final processing, whether to count who got lost in the navigation or how long your users spent on a task. Affinity mapping is a well-known UX research method, essentially making an atom of each individual noteworthy point you encountered and then grouping them into categories. There is certainly a lot of skill to good affinity mapping, and it’s unfortunate this book didn’t dive in deeper on this very common UX practice. (I’ve found that most people are bad at affinity mapping because they make groupings that aren’t the right scope or aren’t useful.)
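The three-to-one budget and the spreadsheet-style tallies are simple enough to sketch in Python. The session records and field names below are invented for illustration; only the three-hours-per-hour ratio comes from the book.

```python
from statistics import median

ANALYSIS_HOURS_PER_RESEARCH_HOUR = 3  # the authors' rule of thumb

# Hypothetical session log, one row per participant.
sessions = [
    {"participant": "P1", "minutes": 60, "got_lost": True, "task_seconds": 95},
    {"participant": "P2", "minutes": 45, "got_lost": False, "task_seconds": 40},
    {"participant": "P3", "minutes": 60, "got_lost": True, "task_seconds": 120},
]

# Total time spent in sessions, and the analysis time that implies.
raw_hours = sum(s["minutes"] for s in sessions) / 60
analysis_hours = raw_hours * ANALYSIS_HOURS_PER_RESEARCH_HOUR

# The two tallies mentioned above: who got lost, and time on task.
lost_count = sum(1 for s in sessions if s["got_lost"])
median_task_seconds = median(s["task_seconds"] for s in sessions)

print(f"{raw_hours} h of sessions -> budget {analysis_hours} h of analysis")
print(f"{lost_count} of {len(sessions)} got lost; median task time {median_task_seconds}s")
```

With only a handful of participants, counts like these describe the sessions you ran and nothing more, so present them as "2 of 3" and not as percentages.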

The book also suggests SWOT analysis, putting research insights into a quadrant of “Strengths”, “Weaknesses”, “Opportunities”, or “Threats.” Word clouds are good for turning a large corpus of words (such as parts of many interviews, or free-form survey responses to one question) into an easier-to-read map of “things that come up a lot.”
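Underneath any word cloud is a plain word count, which you can get without special tooling. Here is a minimal Python sketch using only the standard library. The sample responses, the tiny stopword list, and the function name `top_terms` are all made up for illustration; a real stopword list would be much longer.

```python
import re
from collections import Counter

# A deliberately tiny, assumed stopword list.
STOPWORDS = {"the", "is", "on", "a", "an", "and", "to", "of", "it", "in"}


def top_terms(responses, n=5):
    """Count non-stopword terms across free-text responses and
    return the n most frequent as (term, count) pairs."""
    words = []
    for response in responses:
        tokens = re.findall(r"[a-z']+", response.lower())
        words.extend(t for t in tokens if t not in STOPWORDS)
    return Counter(words).most_common(n)


# Hypothetical answers to one open-ended survey question.
responses = [
    "The export is slow",
    "Export fails on big files",
    "slow search, slow export",
]
print(top_terms(responses, n=2))
```

A count like this tells you which words recur, not what people meant by them, so it works best as a pointer back to the responses worth rereading.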

Analysis is important so that you digest what you’ve found in research, learn from your learnings, and ultimately are ready to share findings with others. The goal here is to transition your organization from a place that makes decisions based on mere opinion to an organization that makes decisions based on opinions referencing research (p 195). I am skeptical that this transition really happens most places, but it obviously makes a place for someone to do UX research that will benefit users, so it makes sense as a goal.

The book has a few good ideas about sharing insights. I used to see sharing insights as explaining things to someone who cares, but I now see it as producing ammunition for future arguing amongst management. Some solid tips:

- Your actual insights can just be a text file

- An executive presentation should be less than 10 slides

- Make multiple versions of your presentation to reach more people (consider a Slack post, an email, something for a newsletter, and a slide deck)

- Keep it simple so that more people will learn from your research before they tune out (p 199)

- Answer “So what?”

- If you have video, a highlight reel can help

- Drawing out user flows, click maps, and personas can give you stronger deliverables that influence future decisions at your organization

- Try to remove yourself and your agenda from your deliverables as much as possible — if it feels like you are using your research to prove a point you’ve already made earlier, it won’t feel safe for others to have opinions referencing research

I am always surprised at how little others in the business care about research findings, but it is a reality. I recorded videos of ten users in our web app and shared them with our Front End dev team, who had never seen a user in the app. Most of them never watched even a single video. Really, what was their incentive? If I want them to put in the time to watch, I might want to edit it down to a highlight reel and add some music or intertitles to bring it closer to the kind of media that these people are used to consuming.

Moving forward, you can more deeply establish value for UX research (and influence decisions) by asking those you involved earlier, “remember when you saw a user struggle with X?” You will want to reference research artifacts to provide rationale for next steps. This encourages “opinions referencing research,” as discussed above.

To Sum Up

As the length of this essay suggests, there is a lot of good stuff in this book. In particular, I went light on the sections on improv and body language, which could be helpful in developing your soft skills for qualitative research. The bigger picture here is weak, and as a technical manual it’s not very thorough. Still, I think it incumbent on the blossoming researcher to study what others have learned, then go out and learn it all over again yourself!